AI and the future of education

Children's AI use, otters, Geminification of education, AI as a political football, trust, power and revolutions, give it a try and much more...

What’s happening

The AI and the Future of Education: Disruptions, Dilemmas and Directions webinar took place yesterday, with an international group of speakers – Bryan Alexander, Helen Beetham, Laura Hilliger, Ian O'Byrne, Karen Louise Smith and Doug Belshaw. They have each responded to UNESCO’s call for critical reflections that consider the longer-term educational implications of AI, and all their individual contributions are available here.

Brought together, it was a fascinating hour of conversation covering everything from the fragility of the AI industry business model, the need to rethink higher education’s assessment system from top to bottom, the importance of not making AI literacy the responsibility of individual students and educators, why students should understand the valuation of a company such as Turnitin, AI as an unethical social technical system, why we must deliberately cultivate safe spaces for students and develop assignments where AI applications are limited or less relevant …and much more.

It was a bit late in the day to write up for this week’s newsletter, but we should have a more in-depth report next week, so, for now, on with this week’s news and views.

AI roundup

The AI carbon debate continues

MIT has published an in-depth story on AI’s emissions load, and how difficult it is to assess. But it is also worth reading the author’s three key takeaways in The Algorithm:

AI is in its infancy and its future is not predetermined. The trends (reasoning models and new hardware devices) point to a more energy-intensive future, but the models, chips, and cooling methods behind the AI revolution could also all grow more efficient over time.

The energy demands of AI video are immense: the energy required to produce even a low-quality, five-second video is 42,000 times more than the amount needed for a chatbot to answer a question about a recipe, and enough to power a microwave for over an hour.

There are more important questions than users’ individual carbon footprints from using AI (as per Andy Masley’s cheat sheet): there are global forces shaping how much energy AI companies are able to access and what types of sources will provide it. There is also very little transparency from leading AI companies on their current and future energy demands.

There’s more in MIT’s new series, Power hungry: AI and our energy future.

Otterly interesting

Ethan Mollick explains why ‘otter on a plane using wifi’ became his go-to test for benchmarking AI image generation over the years and shows just how far these models have come.

He notes that the otter evolution shows two crucial trends with big implications. First, the rapid improvement across a wide range of AI capabilities, from image generation to video to LLM code generation. Second, ‘open weights models’ (which can be downloaded, modified, and run by anyone, anywhere, unlike proprietary AI models such as Midjourney or ChatGPT) are often only months behind the state-of-the-art. This has clear implications for society:

“If you put these trends together, it becomes clear that we are heading towards a place where not only are image and video generations likely to be good enough to fool most people, but that those capabilities will be widely available and, thanks to open models, very hard to regulate or control. I think we need to be prepared for a world where it is impossible to tell real from AI-generated images and video, with implications for a wide swath of society, from the entertainment we enjoy to our trust for online content.”

Children’s AI use

An interesting Alan Turing Institute report is calling for a greater focus on children's AI use and for involving children in decision-making about AI. The survey it draws on finds that nearly one in four children (22%) aged 8-12 are using the technology, with four in 10 of them using it for creative tasks such as making fun pictures, to find out information or learn about something, and for digital play. Over half of teachers (52%) are concerned by the increase in AI-generated work being submitted as the child’s own (which seems to us to be a surprisingly low number). Generative AI use is widespread among teachers too, with three out of five using the technology in their work, most commonly for lesson planning and research. The recommendations highlight the need for policymakers to actively seek children’s perspectives and learn from their experiences in policymaking processes relating to AI, ensuring their rights and needs are not overlooked in decision-making. Other recommendations include improving AI literacy as part of the wider curriculum, addressing issues of bias, plagiarism and environmental impacts, and ensuring responsible use of generative AI among teachers.

Raspberry Pi seminar on AI and children

Raspberry Pi’s next online seminar on AI research is on 17 June at 17:00 BST and features Netta Iivari (University of Oulu). She will share examples of how educators can support children’s transformative agency within computing education, specifically focusing on how to address AI and invite children to critically analyse and design their future with AI. Find out more and sign up.

The Gemini-ification of education

Ben Williamson has things to say about Google’s latest learning report in which it announces that it is “infusing LearnLM directly into Gemini 2.5, which is now the world’s leading model for learning”. Williamson says “make no mistake - it's promoting Gemini as an automated infrastructure for schooling” and queries the absence of the social elements of education.

Quick links

In Digital Media Use in Early Childhood, authors including Sonia Livingstone examine how children under six interact with digital media, exploring both positive and adverse impacts at home, at school and elsewhere. This LSE Review of Books review sets out its strengths.

And more from Sonia Livingstone in this very thorough post calling for more meaningful and rights-respecting opportunities for child and youth participation in research, policymaking, and product design.

Joe McLean says that DfE is signalling that digital literacy will become more important in the curriculum and that six digital infrastructure elements will be made mandatory by 2030.

Computing at School is holding a conference for sixth form computing students at UCL East in Stratford, London on 19 June.

The Education Technology Society podcast looks at what schools can do about digital disinformation in the age of AI. The guest is Professor Olof Sundin (Lund University), who has been researching students’ (dis)information literacy since the early 2000s.

We’re reading, listening…

TechShock from Parent Zone

Natalia Kucirkova, Professor of Early Childhood and Development at the University of Stavanger, Norway, talks to Parent Zone CEO Vicki Shotbolt about whether possible harms warrant us taking the ‘precautionary principle’ when it comes to edtech in schools and how exactly ‘transparency’, ‘accountability’, and ‘fairness’ play into principles of ‘ethical’ edtech.

A political football

In a week when the UK government suffers a fifth defeat in the House of Lords over its plans to allow AI companies to use copyrighted material, Politico takes an in-depth look at how an initially uncontroversial data bill became a political football – and radicalised Elton John.

Yuval Noah Harari on trust, power and revolutions

This week’s Possible podcast features an interview with Yuval Noah Harari (plus transcript) on the importance of building human trust in the use and creation of AI. Harari warns,

“If the AI tycoon himself behaves in an untrustworthy way, and if he himself believes that all the world is just a power struggle, there is no way that he can produce a trustworthy AI.”

What AI won’t change

Stephen Bush in the FT (paywall but registration gives a few free reads a week) tackles the question of whether schools should be equipping children with the skills they will need in the future or giving them a broad foundation of knowledge. In a rather odd argument, he comes down on the side of knowledge but also acknowledges that “in some ways, the distinction between skills and knowledge is a false binary”.

“One reason why ‘knowledge-rich’ curriculums have outperformed ‘skills-based’ programmes is that we are terrible at predicting the future... What ‘skills’ will today’s children need in the world of AI? History teaches us that those we once thought would ensure a reliable income forever are no guarantee of any such thing.”

‘One child in poverty is too many’

OK, this Guardian feature on Slovenia is not about digital and only tangentially about education but, as we await Labour’s strategy on child poverty, it’s an instructive insight into a refreshing approach to ensuring that all children get the best chance in life.

Give it a try

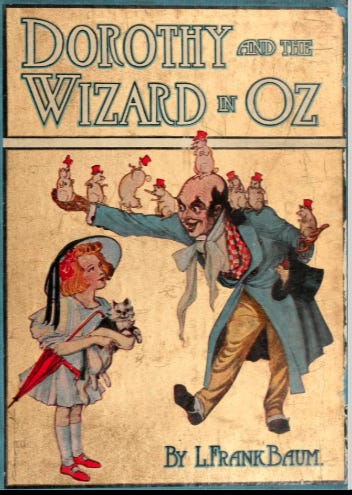

Picturing children's stories

This lovely citizen science project aims to deepen understanding of how children's book illustrations have changed over the past two centuries. It’s collecting detailed descriptions of image content and emotional tone in historical picture books to allow scholars to trace how illustrators have depicted childhood, storytelling and emotion across time.

The books in the project come from the Children's Book collection of the Internet Archive, and the data will help to develop AI tools that can extend this research to large digital libraries around the world.

The project emphasises that “our goal is not to generate art with AI, but to use it as a tool for exploring cultural history. Like all Citizen Reader projects, Picturing Children’s Stories is about expanding human knowledge of storytelling”.

Connected Learning is by Sarah Horrocks and Michelle Pauli