No 101: An edtech tragedy?

The impact of Covid remote schooling, NotebookLM data warning, cognitive stunting, digital 'school wars', more Borges, the Fish Doorbell, and much more...

What’s happening

An ed-tech tragedy? Educational technologies and school closures in the time of Covid-19.

This interview on the Education, Technology, Society podcast resonated with Sarah, bringing back experiences of supporting schools in the UK and in Jordan with remote learning during the Covid-19 pandemic and of devising the BlendEd initiative, a programme of professional development in blended learning pedagogy commissioned by IBM UK Charitable Trust. Sarah and the CLC also took part in Co-Learn, an Erasmus project looking at blended learning pedagogy and delivery during and after the pandemic in Sweden, Denmark, Finland, the UK and the Netherlands. Teachers and headteachers explored themes such as pace, place and path; how technology can support student-teacher feedback and dialogue; how technology can enhance peer-to-peer feedback; and whether digital communities of practice can enhance student learning.

In his book An Ed-Tech tragedy? Educational technologies and school closures in the time of COVID-19, Mark West from UNESCO argues that we should look back on Covid remote schooling as an edtech tragedy and use our pandemic experiences to develop radically different visions of digital education. Mark’s book (which is free to access) describes how the pandemic fundamentally changed our dependency on technology platforms and products, with billions of dollars rushed into edtech infrastructure that schools are now stuck with. He also argues that the Covid experience offers valuable lessons about the frailties and unfairnesses of edtech.

Listening to Mark and reading extracts from his book made Sarah reflect on the success of the UNICEF Learning Bridges programme, which she and her Connected Learning Centre colleagues contributed to. What distinguished this ambitious initiative to teach over 600,000 children in Jordan remotely was that Learning Bridges was designed so that it could be delivered entirely on paper, with QR codes linking to additional resources online. This was intended to overcome the lack of access to technology faced by low-income families and families living in remote areas.

In contrast, West shows in his book that much of the global evidence paints a sombre picture of how increasing dependence on edtech led to “unchecked exclusion, staggering inequality, inadvertent harm and the elevation of learning models that put machines and profit before people.”

As we face yet more global crises, West’s conclusions are salient:

“Crises that necessitate the prolonged closure of schools and demand heavy or total reliance on technology have been exceedingly rare historically. Future crises may present entirely different challenges. The trauma of the pandemic has, in many circles, functioned to elevate technology as an almost singular solution to assure educational resilience by providing flexibility in times of disruption. Investments to protect education wrongly shifted away from people and towards machines, digital connections and platforms. This elevation of the technical over the human is contradictory to education’s aim to further human development and cultivate humanistic values. It is human capacity, rather than technological capacity, that is central to ensuring greater resilience of education systems to withstand shocks and manage crises.”

AI roundup

Britons and tech

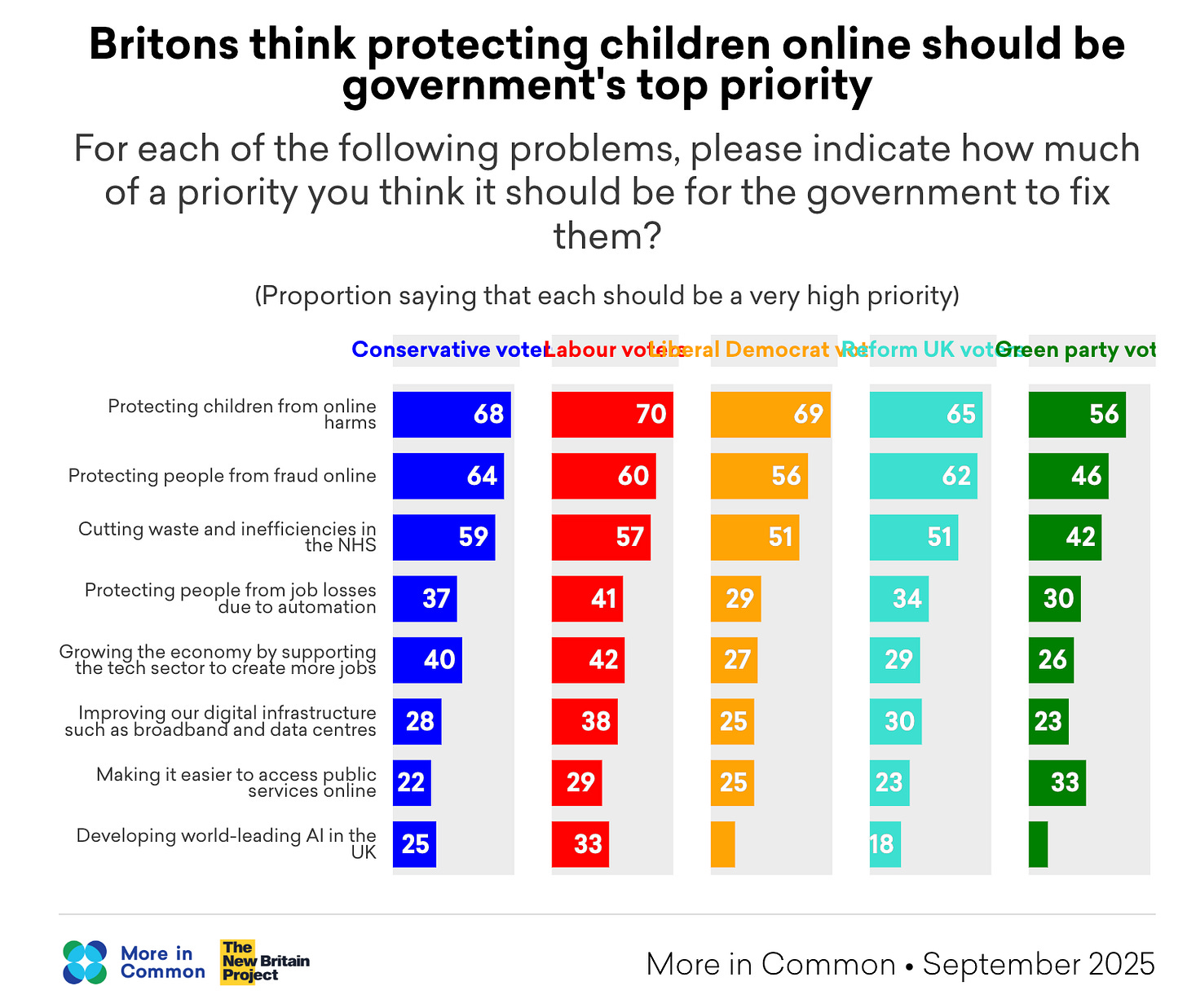

There’s an urban-rural divide in Britain in views on the merits of new technology, according to new research from More in Common. However, Britons are far more likely to say that technology makes them feel connected to others than lonelier, especially among younger generations. The generational divide is even stronger when it comes to AI, with Gen Z twice as likely as the rest of the public to use AI to learn, create, write, or even for emotional support. Older generations (Gen X (!) and up), meanwhile, say that they use AI sparingly if at all. Teachers top the list of roles respondents would least like to see replaced by AI by a wide margin, with 69% saying that we should never explore or consider using AI chatbots instead of teachers in the classroom. Protecting children from harm is the top priority for government in relation to technology.

NotebookLM data warning

Matthew Wemyss has shared an important post explaining why it’s not OK to upload an Education, Health and Care Plan (EHCP) to NotebookLM to get a quick summary. Google states that NotebookLM will not use uploaded documents to train its AI models, which can make it seem safe, but “not used for training” and “handled in compliance with GDPR” are two very different things. EHCPs contain Special Category Data under GDPR. This creates two serious compliance problems: data leaves your setting’s controlled environment, and you lose organisational oversight. He concludes: “Even if your school has Google Workspace for Education, uploading an EHCP to NotebookLM is a compliance failure waiting to happen. There is no lawful basis that comfortably covers processing Special Category Data about children through a tool where you cannot control data residency or demonstrate adequate governance. The 30 seconds you save isn’t worth the risk to a child’s data, or your school’s reputation.”

Meanwhile, Philippa Hardman has put together a NotebookLM for learning design 101, with five evidence-based methods to try.

How to avoid cognitive stunting

We regularly feature the work of Rebecca Winthrop, who leads the Center for Universal Education at the Brookings Institution and recently published a report called A New Direction for Students in an AI World. She and her colleagues conducted an extensive ‘pre-mortem’ of AI in the classroom, speaking with hundreds of educators, students, policymakers and technologists worldwide. In this Undivided Attention podcast from the Center for Humane Technology, Rebecca shares her findings in a really practical way, grounded in real classrooms and conversations with young people. She says that the way both teachers and children are using AI is eroding the relational trust between them. Her findings show that narrow, strategic use of AI with vetted content can be beneficial, but that open, unstructured use of AI can impair children’s capacity for independent learning and thinking, and their ability to take critical feedback. She calls this ‘cognitive stunting’. She recommends 1) shifting what teaching and learning look like so they’re not hackable by AI, 2) preparing all staff in schools and education systems (as well as parents) to understand how to use AI well, and 3) establishing clear regulation and guardrails in schools and in the organisations that oversee them.

Time to swap out ChatGPT?

Historian Rutger Bregman thinks so and urges a boycott to send a message to Silicon Valley. He notes, “I want to be clear: I am not anti-AI. I use AI tools in my work every day. This is not about rejecting technology. It is about rejecting the idea that we have no choice but to fund a company that’s bankrolling authoritarianism.”

Learning to program with LLM tools at KS5

Raspberry Pi is launching a new research study for Key Stage 5 (A-level/Scottish Highers) Computer Science teachers in England, Scotland and Wales. It aims to develop a teaching framework and resources for using LLM tools in programming, covering how they work, safe and effective use, and helping students critically evaluate AI outputs. The study involves two in-person workshops in 2026 and 2027, and teachers can apply via this form.

Quick links

Teaching students how social media algorithms work can change how they engage with what they’re shown, writes Tracey Neale, assistant headteacher at Ysgol Gyfun Cwm Rhymni in Caerphilly, Wales. She references the Safer Scrolling report by UCL and the University of Kent, and argues that it’s essential to include boys in discussions about online misogyny, as hardline approaches can entrench negative ideologies and ways of thinking.

Another reminder that Molly v the Machines is now available to stream on Channel 4 (see trailer).

A youth-led debate organised by Durham Youth Council on whether social media should be banned for young people takes place online on Tuesday 17 March at 10:00am.

The UK government plans to introduce a digital complaints system to improve how schools handle parental concerns and strengthen relationships between parents and school leaders. ParentKind reports that more than five million complaints were submitted by parents in the last year alone. A Schools Week investigation revealed the scale of pressure this places on headteachers, with many losing sleep over the volume of complaints – a significant number of which are believed to have been generated using AI.

England’s largest exam board has warned Ofqual that it risks “moving too slowly” on the rollout of digital exams, reports the TES.

London Centric is a brilliant news resource investigating varied aspects of London, from dodgy billionaire landlords and the risks of Lime bikes to snail farms in office blocks (yes, really). This week it has turned its attention to the so-called ‘school wars’, a trend spread through social media algorithms and built up into a social panic, even though the Met Police say they have yet to encounter a single incident relating to the viral red vs blue school wars.

“Even some London teachers are quietly rolling their eyes. ‘It made me laugh a bit that the social media posts are like ‘bring compasses, a ruler, a metal comb’,’ said one. ‘My students can barely manage to equip themselves with a black biro. Plus, if we’re being real, everyone knows that ‘perilously sharp-edged broken protractor’ would be the true weapon of choice.’”

We’re reading, listening, watching…

The AI lottery

Last week we featured a great post from Ben Ansell that referenced Jorge Luis Borges’s story The Library of Babel in relation to AI. Now Doug Belshaw has picked up the Borges thread, suggesting that the events the writer describes in The Lottery in Babylon are less speculative fiction and more 21st-century reality when it comes to AI.

Teacher v chatbot: my journey into the classroom in the age of AI

Peter C Baker, a freelance writer and novelist in the US, retrained as an English teacher and has chronicled his exploration of what AI means for English teaching and his oscillation between AI‑rejection and AI‑enthusiasm. Ultimately he advocates a measured blend that preserves AI‑free spaces for deep reading, writing and discussion, while harnessing AI’s feedback capabilities under clear instructional guidelines. His model aims to retain the “friction” essential for learning while equipping students with the digital literacy they’ll need beyond school.

Understanding ‘good enough’ parenting and toddlers’ tech use

Janet Goodall, researcher and Professor of Education at Swansea University’s School of Social Science, discusses parental engagement and her recent research into tech use in the early years on ParentZone’s Tech Shock podcast.

Give it a try

The Fish Doorbell

The Fish Doorbell is back! Help fish spawn in Utrecht by ringing the bell and alerting the lock keeper that fish are waiting to get through.

Connected Learning is by Sarah Horrocks and Michelle Pauli

Hi just to clarify -- my article does *not* end with advocacy for AI feedback tools in schools.

Thank you team! The fish doorbell is worth the entire post in and of itself, which is its usual blend of insightful critique and curation of AI and education. And having just the one item at the end for me to try is perfect! 🤩