No 92: Digital learning news and views

DfE tech in schools report, DigComp 3.0, deepfakes, tiny robots, Appvent Calendar and much more...

What’s happening

Straight to the news and reviews this week, with just a couple of notes about what we’re both up to in the run-up to the Christmas break.

Sarah is preparing to host Dutch teachers and headteachers who are coming to look at inclusion practice in London during BETT week. She’s also prepping her talk on design thinking and playful learning with her Danish Co-Create colleagues and working on information and AI literacy CPD for the project.

Michelle is busy with the next phase of work on the DfE’s support materials on the safe and effective use of AI in schools. In updating the resources, it’s incredible how much has already moved on in the AI landscape in just the six months since the toolkits were published back in June. We’ve been getting feedback from teachers and school leaders about how the resources are being used in staff CPD. A common narrative is the wide disparity in AI knowledge and use across staff before the CPD, where some teachers still have very little knowledge of LLMs and how they work, and others are racing ahead – and both camps need guidance on critical areas such as protecting students’ data and intellectual property. This new phase will also see a broadening out to encompass school staff beyond teachers and leaders.

We’d love to hear what you’re up to, an account from your own practice, your thoughts on digital developments in education or any tips or feedback – please drop us a line on email or catch us on LinkedIn.

AI roundup

Trojan horse trap

This HuffPost article about a professor who ‘set a trap’ for his ‘cheating students’ (by inserting hidden text into an assignment’s directions that the students couldn’t see but that ChatGPT could) got our hackles up when we first read it due to the lack of integrity – academic or otherwise – all round. Now Darren Coxon has responded in a LinkedIn post, also arguing that the educator was wrong to focus his attention on the result, not the cause:

“He does not once ask himself why so many students took the AI shortcut. The essay sounds dull and lacking in originality. It’s exactly the sort of essay that students will take a look at and think ‘what’s the point?’.”

When delegation becomes abdication

Rose Luckin has concerns about the ‘agentic revolution’ and the speed with which we’re moving from AI that assists your thinking to AI that acts on your behalf:

“Three years ago, AI was a tool you queried. The cognitive loop remained intact: you were still thinking; the AI was helping you think.

Two years ago, AI became a collaborator: drafting, revising, iterating. The loop stretched but did not break.

Now, AI is becoming an agent. It plans, decides, and acts.”

She warns that seamless AI interfaces discourage people from asking critical questions about how AI works. As AI evolves from tool to autonomous agent, most users lack the understanding to judge what tasks are safe to delegate. Cognitive offloading can enhance productivity but uninformed delegation risks serious consequences, and so we urgently need widespread AI education so people can make informed choices about trust, verification and which decisions require human judgment before agentic AI becomes ubiquitous.

Pair with this Coach not crutch academic paper, however, which suggests that participants randomly assigned to practice writing with access to an AI tool improved more on a writing test one day later compared to writers assigned to practice without AI – so AI can act as a learning tool rather than a cognitive offloader. Philippa Hardman takes an in-depth look at the research and concludes that it doesn’t “disprove” concerns about AI harming learning but it does sharpen and add nuance to our understanding.

Rose Luckin is also holding a one-hour online session for early risers, at 07:30 on 16 December, on what school governors need to know about AI.

Cornwall AI in Education Summit 2026

TecGirls is running an AI best practice all-day event for teachers from all subjects at all levels on 5 Jan in Truro, Cornwall.

Quick links

DfE has published its Technology in Schools survey: 2024 to 2025 research report. Highlights include:

Cyber security is an area of growing success – 85% of leaders provided cyber security training to staff in the last year, a significant increase from 73% in 2023.

Digital maturity: just over half of all schools (55%) had a strategy in place and three quarters (77%) either had one in place or its development was in progress. Having any form of digital technology strategy was more common in secondary settings (70%) than in primaries (52%).

But the proportion of schools with a formal plan to monitor the effectiveness of technology has declined. Only 35% of leaders have any mechanism to monitor effectiveness (down from 41% in 2023).

Many leaders and teachers reflected positively on the impact of technology on staff workload against a comparison point of the start of the 2021/22 academic year. The proportion of teachers reporting that technology had reduced their workload was smaller, but it was still noted by more than four-in-ten (43%). However, a near equal proportion (41%) said it had not made a difference, and 13% said it had increased staff workload.

AI: fewer than half of teachers reported using generative AI (GenAI) for school activities (44%). GenAI was most often being used for lesson planning (35% of all teachers using it at least sometimes for this activity), followed by administration activities (20%) and giving written feedback (15%). GenAI tools were rarely being used for delivering a live lesson (7% of teachers using it at least sometimes) or for marking (5%).

Ofsted’s Chief Inspector, Martyn Oliver, has some words about social media and smartphones in his annual report. He warns that the “influence of social media, whether by chipping away at attention spans and eroding the necessary patience for learning, or by promoting disrespectful attitudes and behaviours, clearly plays a part” in disruptive behaviour. He suggests that schools should be offering pupils “sanctuary” from their mobile phones once the school gates close.

Teachers at a Lancashire school have gone on strike to protest against the use of a Devon-based “virtual teacher”, reports BBC News. Since September, top-set maths students in years 9-11 have been instructed by the remote teacher alongside a regular classroom teacher. The National Education Union has demanded an end to this practice.

Computing at School will be running an event on 4 February 2026, with Becci Peters, on the proposed content for the new computing GCSE.

In preparation for the Irish Learning Technology Association Conference in June 2026, Civics of Technology is sharing thought-provoking Google slides of prompts for secondary students to respond to quotes from the past 150 years about technology and society. For example: “We shape our tools and thereafter our tools shape us.” (John Culkin, 1967).

DigComp 3.0 is the fifth version of the European Digital Competence Framework developed by the European Commission. It describes what is needed to be digitally competent in today’s society, and supports the development of digital competence among individuals of all ages. It covers a wide range of skills levels, from basic to highly advanced, and offers a stable reference point in a rapidly evolving digital technological landscape.

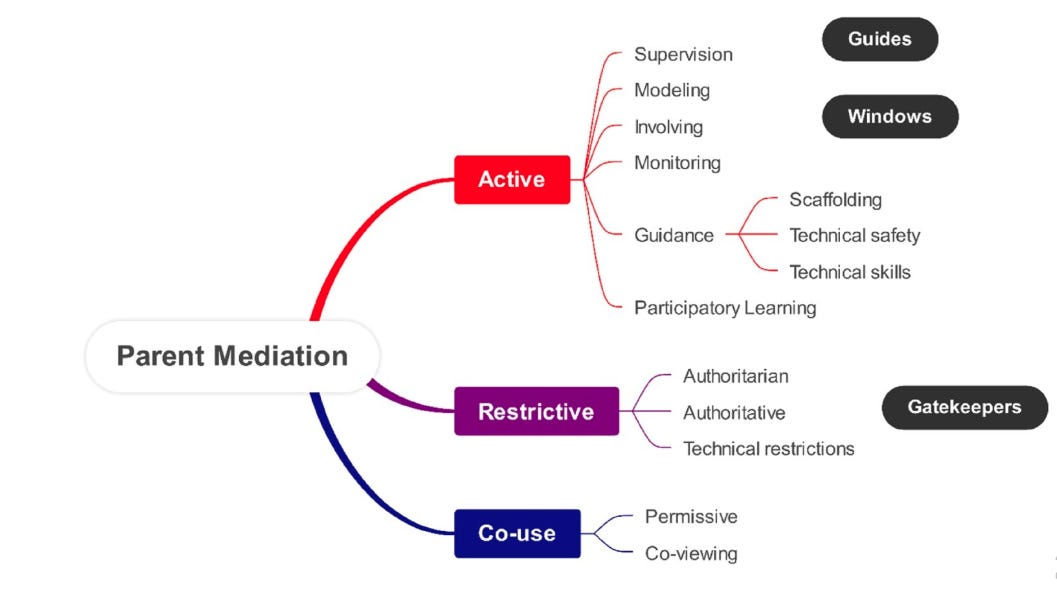

We’ve followed Rosie Flewitt’s nuanced research into digital media, young children and families for many years. Her latest paper, Parental mediation of very young children’s early experiences with digital media at home, explores issues surrounding parental mediation of the use of digital devices by children aged from birth to three years. The paper is part of a much larger project, reporting on issues relating to very young children’s digital rights, digital play, parental attitudes towards very young children’s use of digital devices, and language and literacy learning with digital media.

Pair the early years research paper with this LSE blog by Robin Neuhaus which explains why not all screen time is equal. Neuhaus and his colleague Erin O’Connor analysed online articles about screen time research; their study found that articles that portrayed screen time as harmful spread much faster than those with balanced or mixed findings.

We’re reading, listening, watching…

The Reith Lectures

We tend to recommend the annual Reith Lectures and this year is no exception. In his call for a ‘moral revolution’, Dutch historian Rutger Bregman discusses the impact of technology, AI and social media.

Bregman identifies a specific ideological strain among tech elites who wish to replace democracy with a “techno-monarchy” led by a CEO with absolute power.

And he warns of a “resurgence of fascism” within the technology sector itself. He recounts attending a Silicon Valley conference where a “tech bro” told him, without irony, that “we should get a little fascy”. While he cites reasons for optimism and hope that groups of committed citizens can still change the world for the better, he also cautions:

“It’s absolutely a problem that a lot of online activism today is quite fleeting. It’s easy to send out a tweet and have thousands of people in the streets. But what we’ve seen a lot in the last 10 to 15 years is that many of these movements also collapse. What I’ve learned from the abolitionists and also the suffragettes who fought for the women’s right to vote is the need for perseverance.”

The rise of deepfake pornography in schools

The Guardian has a disturbing read about the rise of deepfake pornography in schools and the impact on the pupils involved, along with a range of opinions on how best to tackle the issue. As one teacher puts it,

“We should all try to imagine how we would have felt 20 years ago if someone had suggested inventing a handheld device which could be used to create realistic pornographic material that featured actual people that you know in real life. And then they’d suggested giving one of these devices to all of our children. Because that’s basically where we are now. We’re letting these things become ‘normal’ on our watch.”

RIP computer keyboard 1964-2025

This conversation is focused on a specific product but it’s an interesting investigation into a post-keyboard future where voice becomes the primary way humans interact with computers. Tanay Kothari went from building an early voice assistant as a child to launching a global voice product. He talks about the removal of typing’s cognitive friction, the importance of AI accessibility, and the nuance of conveying emotional tone digitally. He argues that speaking to computers might be a more ‘human’ experience than typing ever was.

Deepfakes, disinformation and a trundle towards ‘reality apathy’?

This week’s Tech Shock podcast episode from Parent Zone explores deepfakes, relationship chatbots and a reality we might describe as ‘synthetic’.

A tiny robot somersaulting

Inspired by a fruit fly and with a neural network.

Give it a try

Appvent Calendar

Mark Anderson has launched his Appvent Calendar for 2025 with 24 days of recommended tools from guests in the education technology community.

Connected Learning is by Sarah Horrocks and Michelle Pauli

Thanks Michelle and Sarah for another excellent mix of curation and critique! That HuffPost article made me furious when I read it, because it reflects the attitudes I have been seeing across both institutions and Reddit chats - university educators trying to find ways to 'catch students out'. But it is most upsetting because it points to a wider issue of educators trying to police students rather than engage in dialogue. AI is just another excuse for them to do this rather than to look at their own practice. 😢