No 94: AI and digital learning in 2025

What went on in 2025, literacy in digital trousers, chatbots and extremism, the hard problem of AI consciousness, fun and games, and much more...

What’s happening

This is our last newsletter of 2025. Thanks for reading, have a great break if you’re able to take one, and we’ll be back in your inboxes on 9 January 2026. Happy new year!

2025 was characterised by a more nuanced understanding of the opportunities and challenges of AI in education. The tension between innovation and caution defined the year, with educators, researchers and policymakers grappling with how to harness AI’s potential while addressing legitimate concerns about student wellbeing, cognitive development, data privacy and educational equity.

As Ethan Mollick said, “At some point, the fact that over a billion people use this technology and that they self-report high utility has to mean something. There is lots to criticise about AI and plenty of real issues caused by AI, but the narrative that this is all a fake thing that will disappear doesn’t help anyone.”

Central to both Michelle’s work this year, in developing materials and guidance for the DfE, and Sarah’s contributions to the Erasmus Co-Create project, has been supporting teachers with how to teach with and about AI, positioning media and information literacy as fundamental to navigating the educational challenges and opportunities presented by artificial intelligence.

The UK government’s AI guidance for educators landed in June, and it was notable for what it emphasised: human oversight, safe and ethical use and the understanding that AI isn’t appropriate for every situation. Schools weren’t being told to rush headlong into AI adoption. Instead, the message was cautious optimism - experiment, but do it thoughtfully. The research we covered throughout the year backed this up. A Swedish and Australian study found that while AI promised to save teachers time, many ended up spending longer reviewing and reworking AI-generated content. Teachers weren’t failing at “prompting” - they were applying the complex professional judgment that AI simply can’t replicate.

Meanwhile, students were using AI in ways that went far beyond homework help. The National Literacy Trust’s surveys found that keen writers were using it to enrich their practice, while others turned to it for vocabulary improvement or feedback. But more surprisingly, research showed that around 40% of young people affected by youth violence were using AI chatbots for emotional support. Boys, in particular, were turning to AI for therapy and validation. Whether this represents a helpful stop-gap for overstretched mental health services or a concerning development remains an open question.

Equity issues kept surfacing too, with growing concerns that AI adoption could exacerbate inequalities between school types and socioeconomic groups. Oxford’s EdTech Equity project produced research that challenged the “digital native” myth, showing how students’ traditional literacy skills – their ability to read and write – fundamentally shaped how they could engage with supposedly adaptive edtech platforms. It was a stark reminder that technology alone doesn’t level the playing field.

And then there was Australia’s social media ban, which took effect in December and had the world watching. Under-16s in Australia are now barred from Facebook, Instagram, TikTok, Snapchat and others, with tech companies facing fines up to £15 million. Whether it’s a bold step forward or a backward move that loses focus on regulation remains hotly debated. Andy Burrows from the Molly Rose Foundation argued that bans create a cliff edge of harm when teens turn 16, and that we’d be better off targeting the business models of Big Tech to make products fundamentally safer for everyone.

Jonathan Haidt’s The Anxious Generation influenced Australian legislation, and there was ongoing controversy throughout the year about the evidence base for its mental health claims, and about whether it was ‘selling fear’ or raising legitimate concerns. Critics argued that mental health data is ‘noisy’, with multiple factors involved beyond social media. With half of teens visiting YouTube and TikTok at least daily, and some ‘almost constantly’, both sides agreed that technology has transformed childhood.

The Australian ban sat alongside ongoing concerns about deepfakes, AI-generated CSAM, online radicalisation and the documented harms of platforms designed to be addictive. The UK’s Online Safety Act started taking effect, but critics argued Britain’s approach of letting tech companies self-regulate looks increasingly inadequate compared to countries taking decisive action.

As we head into 2026, the questions keep getting more complex: Can regulation keep pace with AI development? Will the Australian social media ban model spread globally? What is the real impact of AI on student cognition, thinking and learning? How do we ensure AI doesn’t amplify existing educational inequalities? What does digital wellbeing look like in an AI-saturated environment? What’s the role of traditional literacy skills in an AI-mediated world? And much, much more…

See you in 2026!

AI roundup

Chatbot for UK teachers

Google DeepMind is working with the Department for Education on an AI chatbot for teachers, based on Gemini, which will be grounded in the national curriculum for England, TES reports. It is part of the Google DeepMind and Google Cloud ‘Gemini for Government’ offer for UK government departments.

AI-generated parent complaints soar

Schools received more than five million formal complaints from parents/carers last year and headteachers are noticing that increasing numbers are AI generated, says Schools Week. They tend to be “lengthy, cite multiple pieces of legislation, have ‘antagonised and inflamed language’, and demand ‘draconian consequences’ for teachers involved in incidents”. The article notes the effect on heads, including increased stress and time spent dealing with the complaints, although one head said that it was “quite enabling” for parents with low literacy levels. Also mentioned is Companion, an AI-assisted tool used by about 40 schools to streamline the complaints process.

Literacy in digital trousers

In the year in which vibe coding was the phrase of the year according to Collins Dictionary, Matt Wemyss posts about setting his year 10s a vibe coding task using Canva Code. In it, he draws out an interesting point about literacy - actual literacy rather than digital literacy:

“The learning wasn’t coming from the code at all. It was coming from the writing. From the literacy. They had to take whatever vague, half formed idea they were carrying around and turn it into clear, specific language. If they didn’t, Canva Code produced something that was nearly right but not quite. Which meant they had to rewrite. Tighten. Make their thinking sharper. And that became the debugging. Not deciphering symbols, just explaining themselves more accurately than they’re used to. So yes, it turns out this is literacy, in digital trousers.”

There’s more clear thinking from Matt Wemyss in this post on deepfakes and school social media, in which he maps the trade-offs between schools wanting to present themselves authentically by showing real photos of real children, and the very real danger of those public-facing images being used in harmful ways.

And more on vibe coding from Rest of World, which describes how a first-time vibe coder fared creating a simple tool.

Digital dilemmas and truths

Thanks to Michaela Carmichael for sharing these two new resources from Common Sense Media. The first is a set of digital dilemmas for educators to discuss, which use a specific approach around understanding feelings and options.

And, for students, Common Sense have created the Two Truths and AI game: an interactive digital literacy game for grades K–12 that teaches students to identify AI-generated content and develop critical media literacy skills.

Chatbots and extremism

Picking up on the Youth Endowment Foundation research we featured in last week’s newsletter (which found that one in four teenagers in England and Wales have turned to AI chatbots for mental health support in the past year), our friends at Slow AI have explored how a chatbot can become a radicalising influence. They talk about the “intimacy infrastructure” that makes content land more effectively, and the “always-on” availability: “A human recruiter needs sleep. A chatbot doesn’t.”

See also Slow AI on using AI to speak truths to a child

Quick links

As part of the UK government’s strategy to halve violence against women and girls, teachers are to be trained to spot early signs of misogyny in boys. Pupils will be taught about issues such as consent, the dangers of sharing intimate images, how to identify positive role models, and to challenge unhealthy myths about women and relationships. There will also be a new helpline for teenagers to get support for concerns about abuse in their own relationships.

The Raspberry Pi Computing Education Research Centre has an end of year roundup of some of the great projects it’s been involved in, from computing education around the world, to learning to debug and teacher action research projects.

We’re reading, listening, watching…

The very hard problem of AI consciousness

Fascinating Transformer article in which the author wrestles with the philosophical - and one day possibly practical - question of ‘AI welfare’. What threshold should we set for allowing AI systems into our moral circle and what should AI developers do, practically speaking, if and when those thresholds are crossed? However, as she notes, “Unfortunately, humans have a terrible track record with the consciousness of unfamiliar beings.”

The year tech embraced fakeness

This long-ish, link-packed and slightly depressing read from Indicator (via Storythings) argues that, in 2025, powerful people, companies and institutions welcomed fakeness and deception like never before - and the rest of us faced the consequences. The piece raises detailed objections to the legitimisation, funding and promotion of deceptive, misleading and unethical online manipulation tactics, and makes a plea for some basic standards from people and organisations with huge influence, resources and power.

Also via Storythings, and less depressing, is this incredible All of human history in one hour video, where 50 years pass each second in a long sideways tracking shot and not a lot happens until the final five minutes.

Give it a try

Here are some of our favourite Give it a try choices from the year with a playful theme for the Christmas break.

What’s your AI-dentity? – A Bloomberg quiz to determine your relationship with AI (eg Accelerationist, Pragmatist, Doomer)

Ludocene is a free game finder

Twinpics users get a daily challenge image and write short prompts (under 100 characters) to make an AI generate an image that closely matches the original, scoring their success; it’s used for improving AI prompting skills, vocabulary and descriptive writing

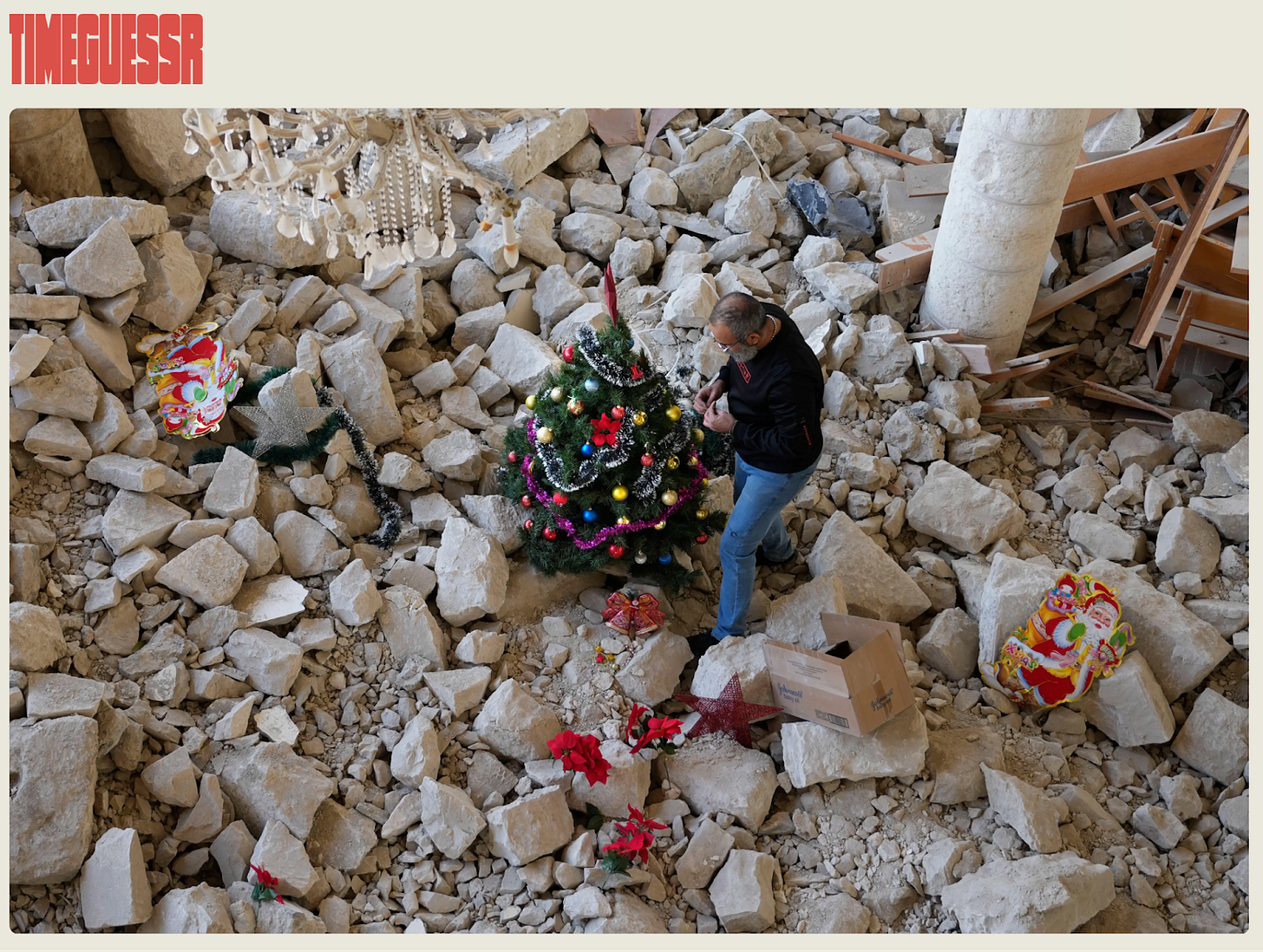

Timeguessr daily is a game that tests geography and history skills by matching a photo to a year and location

Finger print drum machine from Tiny Awards where your browser fingerprint creates a unique beat

Moving paintings × Fukuda Art Museum – A Google Arts and Culture experiment inviting users to view details of curated paintings that come to life with artistic and realistic video

And for some quieter, more reflective moments at New Year why not try Adrift again and release some doubts and cares, or try our top choice of the year: One Minute Park. It’s a collaborative, web-based art project where people contribute short, 60-second video clips of local parks from around the world, offering a quiet, algorithm-free glimpse into simple, peaceful green spaces for others to watch and enjoy.

Connected Learning is by Sarah Horrocks and Michelle Pauli

It's becoming clear that with all the brain and consciousness theories out there, the proof will be in the pudding. By this I mean, can any particular theory be used to create a human adult level conscious machine? My bet is on the late Gerald Edelman's Extended Theory of Neuronal Group Selection. The lead group in robotics based on this theory is the Neurorobotics Lab at UC Irvine. Dr. Edelman distinguished between primary consciousness, which came first in evolution and which humans share with other conscious animals, and higher order consciousness, which came only to humans with the acquisition of language. A machine with only primary consciousness will probably have to come first.

What I find special about the TNGS is the Darwin series of automata created at the Neurosciences Institute by Dr. Edelman and his colleagues in the 1990s and 2000s. These machines perform in the real world, not in a restricted simulated world, and display convincing physical behavior indicative of higher psychological functions necessary for consciousness, such as perceptual categorization, memory, and learning. They are based on realistic models of the parts of the biological brain that the theory claims subserve these functions. The extended TNGS allows for the emergence of consciousness based only on further evolutionary development of the brain areas responsible for these functions, in a parsimonious way. No other research I've encountered is anywhere near as convincing.

I post because on almost every video and article about the brain and consciousness that I encounter, the attitude seems to be that we still know next to nothing about how the brain and consciousness work; that there's lots of data but no unifying theory. I believe the extended TNGS is that theory. My motivation is to keep that theory in front of the public. And obviously, I consider it the route to a truly conscious machine, primary and higher-order.

My advice to people who want to create a conscious machine is to seriously ground themselves in the extended TNGS and the Darwin automata first, and proceed from there, by applying to Jeff Krichmar's lab at UC Irvine, possibly. Dr. Edelman's roadmap to a conscious machine is at https://arxiv.org/abs/2105.10461, and here is a video of Jeff Krichmar talking about some of the Darwin automata, https://www.youtube.com/watch?v=J7Uh9phc1Ow

Thanks for the shout out and the excellent curation! Looking forward to playing 'Two truths and AI' in the break! Have a wonderful festive season and see you in 2026! Thank you again for such a consistently brilliant newsletter. 🙏