The carbon cost of using AI – and how to reduce it

New research on the environmental impact of AI, whether Google's Gemini is all it's cracked up to be, Hour of Code, a roundup of digital news and resources, and an AI PDF summariser to try

What’s been happening

With COP28 underway, some timely attention has been paid to the impact of AI on the planet – an issue that is too often overlooked. Hugging Face has conducted important research into the carbon emissions caused by using AI models – and you might be surprised at the findings.

The study is the first to look at the carbon emissions of using AI models, rather than the environmental cost of training them – which we already know is huge. The researchers ran 10 common AI tasks across 88 different models and found that generating a single image with a powerful AI model takes as much energy as fully charging a smartphone. They also found that using large generative models to create outputs was far more energy-intensive than using smaller AI models tailored to specific tasks. The researchers hope their work will encourage people to be choosier about when they use generative AI and to opt for more specialised, less carbon-intensive models where possible.

In the UK education sector, the expert body on IT sustainability (among many other digital matters) is Jisc. Last year it published Exploring digital carbon footprints, a report by Scott Stonham on the hidden environmental cost of the digital revolution and the steps universities and colleges can take to address it.

Although the report focuses on the tertiary sector, there’s a lot in it for schools and individuals. For example, it offers advice on choosing video call providers (Teams, Zoom, Google Meet etc) based on their carbon emissions. It also notes that email signatures are one of the quickest and possibly easiest areas for improvement: institutions should educate staff and students on the need to minimise emails and unnecessary attachments, including images in signatures. Jisc also runs the National Centre for AI, which so far has published this blog post on the environmental impact of AI; we look forward to hearing more from it on this critical issue.

AI roundup

Enter Gemini

Just when you might be getting used to Microsoft’s Copilot as your go-to chatbot, this week Google announced Gemini – its most capable AI model yet. In his Platformer newsletter, Casey Newton described how Gemini could analyse the contents of a picture and answer questions about it, or create an image from a text prompt. Interestingly for the education sector, Newton adds, “During a briefing on Tuesday, a Google executive uploaded a photo of some maths homework in which the student had shown their calculations leading up to the final answer. Gemini was able to identify at which step in the student’s process they had gone awry, and explained their mistake and how to answer the question correctly.”

Bard will now use a fine-tuned version of Gemini Pro for its biggest upgrade yet. However, was Google’s demonstration of Gemini quite all that it seemed? Check out Gary Marcus’s post for a more critical take.

Ethan Mollick’s post on which LLM to use is well worth reading, especially given that ChatGPT Plus, the paid tier that offers GPT-4, is not currently accepting new subscribers.

AI Pedagogy Project

The AI Pedagogy Project from metaLAB (at) Harvard is a collection of assignments and materials designed to help educators engage their students in conversations about the capabilities and limitations of AI. It includes an interactive guide (with an AI primer, an LLM tutorial and resources) and an evolving set of assignments sourced from educators around the world. (Via More Than Robots)

Is the Turing Test dead?

Researchers have proposed a new kind of intelligence test that treats machines as participants in a psychological study to determine how closely their reasoning skills match those of human beings.

Quick links

It’s Hour of Code this week, and alongside all the usual fun activities designed to introduce computer science to learners of all skill levels (such as Dance Party, Tickle Monster and Nasa’s Space Jam), there’s a focus on AI, with a How AI Works video series and AI 101 for Educators.

There are three things parents should teach children about AI, according to these Australian academics: be critical, watch out for chatbots, and be careful with images, audio and video.

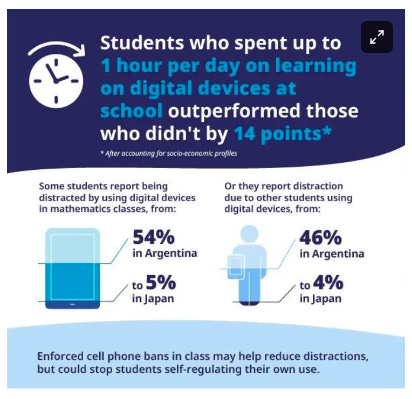

The PISA 2022 Insights report has been released, and the European Edtech Alliance has collated some of its key points on digital education. The Alliance highlights that moderate use of digital devices in school is associated with higher performance: students who spent up to one hour a day on digital devices for learning activities scored 14 points higher in mathematics. However, the relationship differs greatly according to the purpose of use, and the study distinguishes between use for learning activities and “leisure use”. This important distinction needs further exploration so that we can better understand which pedagogical practices involving devices, and the resources and tools that go with them, are successful.

We’re listening to, reading, watching…

Two fascinating podcast episodes on the NYT’s Ezra Klein Show this week. First, a review of developments in AI and a look at what to expect in 2024. Plus, an interesting discussion about reading: how our brains process information differently when we’re reading on a Kindle or a laptop as opposed to a physical book, and how exposure to such an abundance of information is rewiring our brains and reshaping our society.

Monica ‘Brick Lane’ Ali asks whether she would use AI to write her novels (spoiler: she wouldn’t), but ‘Aidan Marchine’ (novelist and journalist Stephen Marche) has given it a go with Death of an Author, a murder mystery created using three AI programmes. It has ‘metafictional zest’, says the New York Times…

As we mentioned last week, on Saturday Sarah will be chairing a conversation with Arthur I Miller. Get a taster with some examples of creative works made using AI.

Give it a try

PopAI

We’ve been trying out various AI document and PDF summarisers recently. All of them have benefits and drawbacks, but they can do a great job. This post suggests a few, with their pros and cons. We gave PopAI a try and liked the way it summarised PDFs and allowed us to ‘chat with the images’ in reports.

Connected Learning is by Sarah Horrocks and Michelle Pauli